Sliding Window

Many social network datasets have been, and still are, collected through surveys and interviews. For example, an advice network can be collected by asking each individual in a group to name the people they go to for advice. Another, more rigid, method is to give each individual a list of the other people in the group and let them select the people they go to for advice (roster surveys).

In addition to a number of biases (e.g., informant inaccuracy; Bernard et al., 1984; see my thesis for a critique), survey instruments and direct observation methods are generally labour-intensive and difficult to administer. As a result, most networks collected using these methods are fairly small, often comprising only a few tens (e.g., Bernard et al., 1988) or hundreds (e.g., Fararo and Sunshine, 1964) of people.

This limitation has been overcome by using archival data sources instead of surveys. For example, the online social network of 1,899 people used in Patterns and Dynamics of Users’ Behaviour and Interaction: Network Analysis of an Online Community could only realistically be collected because the social interactions were recorded automatically. Other social network papers using archival data sources include Kossinets and Watts (2006) and Uzzi and Spiro (2005).

Although archival data sources allow larger networks to be collected, and in turn more robust statistical analyses to be applied, a bias might be introduced into the data if information about the severing of ties is not included: archival data sources have a much better memory than individuals.¹ For a social network, this could mean that social interactions that are no longer relevant to an individual are recorded as still being relevant. Moreover, the weight of ties might be overestimated. These issues do not arise when data are collected through surveys, as each individual would only list current or relevant friends, with the current tie strength (if they are honest, that is).

In the empirical analysis of the online social network, we studied the network in two ways. First, we assumed that social ties never decay (the cumulative perspective). Under this assumption, a social interaction recorded on, for example, day 12 is included in the analysis from that day onwards and is never removed. Second, we followed Kossinets and Watts (2006) and imposed lifespans on the social relationships. This ensured that if two people stopped communicating, their tie was severed. The same applied to the weighted network: if the rate of messages sent from one person to another decreased, the tie was weakened.
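The difference between the two perspectives can be sketched in base R. The message log and the helper `tie_weights` below are hypothetical and only illustrate the idea; they are not how tnet implements lifespans. A tie's weight is taken to be the number of messages sent on it, counted either over the whole history or only over the last `window` days.

```r
# Hypothetical message log: day sent (t), sender (i) and receiver (j).
msgs <- data.frame(
  t = c(1, 3, 12, 12, 40, 41),
  i = c("A", "A", "B", "C", "A", "B"),
  j = c("B", "B", "C", "A", "B", "C"),
  stringsAsFactors = FALSE
)

# Weight of each directed tie on day `at`: the number of messages sent on it.
# window = Inf keeps every past message (cumulative perspective); a finite
# window keeps only messages from the last `window` days (sliding window).
tie_weights <- function(msgs, at, window = Inf) {
  keep <- msgs$t <= at & msgs$t > at - window
  if (!any(keep)) {
    return(data.frame(i = character(0), j = character(0), w = numeric(0)))
  }
  active <- msgs[keep, ]
  aggregate(list(w = rep(1, nrow(active))),
            by = list(i = active$i, j = active$j), FUN = sum)
}

tie_weights(msgs, at = 42)               # A->B has weight 3, B->C 2, C->A 1
tie_weights(msgs, at = 42, window = 21)  # only A->B and B->C remain, weight 1 each
```

In the cumulative view the early burst of messages from A to B still inflates that tie on day 42, while the 21-day window both drops the stale C->A tie and lowers the A->B weight to its recent level.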

The length of the lifespan is crucial in determining which past events are taken into account when generating the network structure at a given point in time. By analysing which past events are relevant to the current state of the network, the length of the lifespan can be defined (Kossinets and Watts, 2006). An ill-defined lifespan will either break continuous social interactions into independent sets of interactions (if it is too short) or combine two separate interactions into a single one (if it is too long).
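A minimal sketch of this trade-off, assuming a tie stays alive for `window` days after each contact: the hypothetical helper `count_episodes` counts how many separate relationships one pair's contact history is split into. The contact days are made up for illustration.

```r
# Hypothetical days on which one pair of users exchanged messages.
contact_days <- c(1, 5, 9, 20, 24, 60)

# Each contact keeps the tie alive for `window` days, so a new, independent
# "episode" starts whenever the gap to the previous contact exceeds the window.
count_episodes <- function(days, window) {
  days <- sort(days)
  1 + sum(diff(days) > window)
}

sapply(c(3, 7, 14, 42), function(w) count_episodes(contact_days, w))
# 6 3 2 1
```

A 3-day lifespan fragments a fairly steady exchange into six separate relationships, whereas a 42-day lifespan merges the contact on day 60 with contacts more than a month earlier into a single one.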

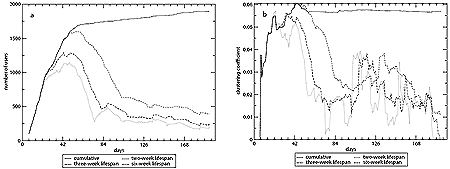

To illustrate the difference between imposing a lifespan and not imposing one, the figure above shows results from the online social network, where networks are constructed both cumulatively and with sliding windows of 2, 3, and 6 weeks. Both panels highlight how sensitive network measures are to the use of a sliding window. Panel a suggests that only a small core of users actively uses the virtual community at the end of the observation period. An analysis of the cumulative network at that point would be heavily influenced by the majority of users who only used the network in the first 6 weeks, and would not reflect the activity currently occurring in the community. This could bias network measures and, ultimately, the analysis. Panel b shows the evolution of one possible measure, the clustering coefficient. In particular, the clustering coefficient measured on the active core is mostly below the value found in the cumulative network.
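The pattern in panel a can be mimicked on toy data. The sketch below is not the online social network; it uses a hypothetical log in which five users are active early and only two remain active later, and a hypothetical helper `active_users` that counts users with at least one tie on a given day.

```r
# Hypothetical message log: most users are active early, a small core later.
msgs <- data.frame(
  t = c(1, 2, 2, 3, 5, 30, 31, 33),
  i = c("A", "B", "C", "D", "E", "A", "B", "A"),
  j = c("B", "C", "D", "E", "A", "B", "A", "B"),
  stringsAsFactors = FALSE
)

# Number of users with at least one tie on day `at`, counting either all past
# messages (window = Inf) or only those from the last `window` days.
active_users <- function(msgs, at, window = Inf) {
  keep <- msgs$t <= at & msgs$t > at - window
  length(unique(c(msgs$i[keep], msgs$j[keep])))
}

days <- c(7, 21, 35)
sapply(days, function(d) active_users(msgs, d))               # 5 5 5
sapply(days, function(d) active_users(msgs, d, window = 14))  # 5 0 2
```

The cumulative count never falls, so on day 35 it still reports five users even though only A and B are still communicating.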

The figure also highlights how sensitive network measures are to the time of sampling. With shorter lifespans, network measures become more unstable and more dependent on the time at which the observation is taken. Kossinets and Watts (2006) argued that network measures remain stable over time, which would allow the average of a measure over a given observation period to be generalised to a longer period of time. The figure, however, suggests that network measures are not stable when social relationships have a lifespan. It is therefore difficult to infer, from network snapshots, stable network measures that reflect the network structure over a longer period of time.

By allowing for the severing of ties and sampling the network structure at various times over a longer period (e.g., each day in the observation period as we did for the online social network), the validity and robustness of a network analysis could be improved.
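Daily sampling can be sketched in base R on a hypothetical message log. Note the swapped-in measure: `global_clustering` below is a plain binary, undirected global clustering coefficient (closed triplets over all triplets), not tnet's weighted `clustering_w`, and the log and 5-day window are made up for illustration.

```r
# Global clustering coefficient of a binary, undirected network:
# closed triplets / all triplets = trace(A^3) / sum(k * (k - 1)).
global_clustering <- function(from, to, nodes) {
  n <- length(nodes)
  A <- matrix(0, n, n)
  idx <- cbind(match(from, nodes), match(to, nodes))
  A[idx] <- 1
  A[idx[, 2:1, drop = FALSE]] <- 1  # ignore the direction of messages
  diag(A) <- 0
  k <- rowSums(A)
  triplets <- sum(k * (k - 1))
  if (triplets == 0) return(NA_real_)
  sum(diag(A %*% A %*% A)) / triplets
}

# Hypothetical message log (day, sender, receiver).
msgs <- data.frame(
  t = c(1, 1, 2, 4, 5, 9, 10, 11),
  i = c("A", "B", "C", "D", "D", "A", "E", "E"),
  j = c("B", "C", "A", "E", "F", "D", "A", "D"),
  stringsAsFactors = FALSE
)
nodes <- sort(unique(c(msgs$i, msgs$j)))

# Sample the network once per day, keeping messages from the last 5 days.
window <- 5
daily <- sapply(1:12, function(day) {
  keep <- msgs$t <= day & msgs$t > day - window
  global_clustering(msgs$i[keep], msgs$j[keep], nodes)
})
daily  # days 1 to 12: 0, 1, 1, 1, 0.75, 0, 0, 0, 0, 0, 1, 1

# The cumulative network built from the whole log gives a single value.
global_clustering(msgs$i, msgs$j, nodes)  # 0.5
```

Even on this tiny log, the windowed value swings between 0 and 1 from one day to the next while the cumulative value sits at 0.5, which is why a single snapshot says little about the network over a longer period.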

_____________________

¹ A number of other limitations, notably validity issues, could also be introduced into the data when using archival data sources.

Want to test it with your data?

First, you need to ensure that your data conform to the tnet standard for longitudinal networks. Then you need to load it into an R session.

Second, you need to download, install, and load tnet.

Third, by combining the add_window_l-function and the as.static.tnet-function, an instantaneous structure of the network at any point in time can be created.

```r
# Load tnet
library(tnet)

# Load the Facebook-like online social network
data(OnlineSocialNetwork.n1899)
lnet <- OnlineSocialNetwork.n1899.lnet

# Add the severing of ties after 21 days
lnet <- add_window_l(lnet, window=21)

# Create the static network on June 30, 2004, at 7am
net <- as.static.tnet(lnet[lnet[,"t"]<as.POSIXlt("2004-06-30 07:00:00"),])

# Calculate network measures, such as the global clustering coefficient
clustering_w(net)
```

References

Bernard, H. R., Killworth, P. D., Kronenfeld, D., Sailer, L. D., 1984. The problem of informant accuracy: the validity of retrospective data. Annual Review of Anthropology 13, 495-517.

Bernard, H. R., Killworth, P. D., Evans, M. J., McCarty, C., Shelley, G. A., 1988. Studying social relations cross-culturally. Ethnology 27 (2), 155-179.

Fararo, T. J., Sunshine, M., 1964. A Study of a Biased Friendship Network. Syracuse University Press, Syracuse, NY.

Kossinets, G., Watts, D. J., 2006. Empirical analysis of an evolving social network. Science 311, 88-90.

Uzzi, B., Spiro, J., 2005. Collaboration and creativity: The small world problem. American Journal of Sociology 111, 447-504.

12 Comments

1. Amanda Traud | January 14, 2014 at 4:03 pm

What if your network happens over an hour, could you say window=1/(24*60) and have the edges disappear every minute?

2. Tore Opsahl | January 14, 2014 at 4:12 pm

Hi Amanda,

Thanks for your comment. The code works by multiplying the window parameter by 60*60*24, so if the window parameter is divided by 60*24, it should work. Do inspect the output to ensure it produces the desired outcome.
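A quick arithmetic check of that conversion, assuming (as stated in the reply) that the window is given in days and turned into seconds by multiplying it by 60*60*24:

```r
# Assumption taken from the reply above: `window` is in days and is
# converted to seconds by multiplying it by 60*60*24.
window <- 1 / (24 * 60)                  # one minute, expressed in days
window_seconds <- window * 60 * 60 * 24
window_seconds                           # 60, up to floating-point rounding
```

Because 1/(24*60) is not exactly representable as a double, it is safer to compare the result with `all.equal()` than with `==`.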

Tore

3. Amanda Traud | January 14, 2014 at 4:14 pm

Thanks tons!!

4. davidnfisher | July 15, 2014 at 1:18 pm

Hi Tore,

The link to the standards tnet requires for longitudinal data is dead, and the page on defining longitudinal networks is not finished, but I was wondering if you would be able to post a template or similar to outline how to format longitudinal network data for analysis in tnet?

Cheers!

5. Tore Opsahl | July 16, 2014 at 2:59 am

Hi David,

Fixed the links! Let me know what you are doing with longitudinal networks.

Tore

6. davidnfisher | July 16, 2014 at 9:11 am


That's great, thanks!

This may seem like an easy fix, but I can’t find a way of getting e.g. 2008-05-21 16:55:12 into “2008-05-21 16:55:12” (with quotation marks). Excel never preserves the format of the data and just gives a number, e.g. “39620.6798611111”.

What I am hoping to do is take a list of pairwise dated interactions among a population of wild animals and see whether they have preferred associates. I aim to determine whether associates are preferred by testing for evidence of reinforcement/reciprocity (I haven’t decided which one to go for yet) in the formation of new links in the longitudinal network. I don’t have ties decreasing in strength, so I hope to use the sliding window to remove interactions that occurred more than 2-4 days earlier (again, the precise window is still to be decided).

I have location in space of the individuals as well so I aim to control for that, to see if they are doing more than just interacting with those nearest them, i.e. choosing some and not others. I also have appearance and death dates, so can account for overlap in time as well.

Will probably be coming back to you for help later on, so thanks again in advance.

David

7. davidnfisher | July 17, 2014 at 10:09 am

Ok, I’ve got round the problem with the date formatting, it seems (well, it’s “06/05/2008 15:37:00”, so perhaps the date order needs to be rearranged...), but now when I read it in, it says the level sets of factors are different. The network is not symmetrical due to the way we record interactions, but it should be for analyses as it’s undirected. Can you symmetrise a longitudinal network?

8. Tore Opsahl | July 17, 2014 at 11:28 am

Hi David,

To solve the date issue, have a look at as.POSIXct:
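A minimal base-R sketch of both conversions raised above. It assumes that “06/05/2008 15:37:00” is day/month/year (6 May 2008) and that the Excel number uses Excel's default 1900 date system, whose serial numbers count days from 1899-12-30 in R's convention; both are assumptions about the data, and UTC is used to avoid daylight-saving surprises.

```r
# Timestamps exported as day/month/year (assumption: "06/05/2008" is 6 May).
raw <- c("06/05/2008 15:37:00", "21/05/2008 16:55:12")
stamps <- as.POSIXct(raw, format = "%d/%m/%Y %H:%M:%S", tz = "UTC")

# Re-emit them in the year-month-day layout used in the post.
format(stamps, "%Y-%m-%d %H:%M:%S")
# "2008-05-06 15:37:00" "2008-05-21 16:55:12"

# Excel serial date-times are days since 1899-12-30 plus a day fraction;
# convert to seconds and round to whole seconds to avoid floating-point drift.
excel <- 39620.6798611111
from_excel <- as.POSIXct(round(excel * 86400), origin = "1899-12-30", tz = "UTC")
format(from_excel, "%Y-%m-%d %H:%M:%S")
```

If the first field is actually the month, swap `%d` and `%m` in the format string.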

To sort the factor issue, use stringsAsFactors=FALSE when reading the data.
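The error message itself comes from comparing two factor columns whose level sets differ, which is what happens when i and j are read as factors. The sketch below reproduces it and shows one way to avoid factor columns altogether: keep the labels as character and map both columns onto one shared set of integer node ids. The animal tags and the `edges` data frame are hypothetical, and this is not a diagnosis of why the error persisted in the data above.

```r
# Two factor columns with different level sets cannot be compared.
i <- factor(c("ant1", "ant2"))
j <- factor(c("ant2", "ant3"))
res <- tryCatch(i == j, error = conditionMessage)
res  # "level sets of factors are different"

# Keep labels as character, then map both columns onto shared integer ids.
edges <- data.frame(i = c("ant1", "ant2"), j = c("ant2", "ant3"),
                    stringsAsFactors = FALSE)
ids <- sort(unique(c(edges$i, edges$j)))
edges$i <- match(edges$i, ids)  # ant1 -> 1, ant2 -> 2
edges$j <- match(edges$j, ids)  # ant2 -> 2, ant3 -> 3
edges
```

Keeping `ids` around makes it possible to translate integer node ids back to the original tags after the analysis.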

The network can be converted to an undirected network with the symmetrise_w-function once you have (1) added the sliding window using the add_window_l-function and (2) created a static network using the as.static.tnet-function.
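What symmetrising does to a weighted edgelist can be sketched in base R. The helper `symmetrise_sum` is hypothetical and stands in for tnet's symmetrise_w only to show the idea; it sums the two directions, which is an assumption (taking the maximum of the two weights is another common choice).

```r
# Directed, weighted edgelist in which interactions were recorded one way.
net <- data.frame(i = c(1, 2, 3), j = c(2, 1, 1), w = c(2, 1, 4))

# Give i->j and j->i the same weight by adding every tie in reverse
# and summing the weights of duplicated pairs.
symmetrise_sum <- function(net) {
  both <- rbind(net, data.frame(i = net$j, j = net$i, w = net$w))
  out <- aggregate(w ~ i + j, data = both, FUN = sum)
  out[order(out$i, out$j), ]
}

symmetrise_sum(net)  # 1-2 and 2-1 get weight 3; 1-3 and 3-1 get weight 4
```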

Hope this helps,

Tore

9. davidnfisher | July 17, 2014 at 12:46 pm

The date fix works fine, thanks!

However, after reading the data in I still get the same error, with and without “stringsAsFactors=FALSE” :

> clnet08 as.tnet(clnet08,type="longitudinal tnet")

Error in Ops.factor(net[, "i"], net[, "j"]) :
level sets of factors are different

Apologies for bugging you with these, it betrays my lack of expertise in coding more than anything

10. davidnfisher | July 17, 2014 at 12:47 pm

Sorry the code should look like this:

> clnet08 as.tnet(clnet08,type="longitudinal tnet")

Error in Ops.factor(net[, "i"], net[, "j"]) :
level sets of factors are different

11. davidnfisher | July 17, 2014 at 1:02 pm

Ok, it deletes the line where I enter the data…

> clnet08<-read.table("lnet08.txt",sep="\t",stringsAsFactors=FALSE)

as.tnet(clnet08,type="longitudinal tnet")

Error in Ops.factor(net[, "i"], net[, "j"]) :

level sets of factors are different

12. Tore Opsahl | July 18, 2014 at 12:09 am

Hi David,

Please send me an email with the data and code that you currently have. Then I will have a look at it.

Best,

Tore